Legal Spec Breakdown (LSB)

Turning legal deconstruction into a scalable human + AI workflow

At a glance

Challenge

Legal deconstruction was manual, inconsistent, and handled outside the product ecosystem, making it difficult to scale as law volume and complexity increased.

Approach

I defined the product and workflow model for LSB, translating a high-stakes legal process into an AI-assisted system that balanced automation with human judgment across three modes: Validate, Create Legal Spec, and Interpret.

Impact

Established a more structured, scalable approach to legal deconstruction, validated through successful pilots and early signals of AI usefulness, and laid a stronger foundation for future automation.

Why this mattered

What looked like a manual workflow problem was actually a system design problem. Legal teams were deconstructing laws outside the product ecosystem, using inconsistent practices that made the process difficult to scale, hard to track, and vulnerable to variation in quality.

As law volume and complexity increased, solving this required more than automation. It required defining how AI and humans should collaborate in a domain where consistency, defensibility, and judgment matter.

At stake

Scalability: Manual deconstruction could not keep pace with growing law volume and complexity.

Consistency: Non-standard practices increased variation across interpretations and downstream workflows.

Defensibility: Legal outputs needed to be clear, traceable, and grounded enough to withstand scrutiny.

Operational leverage: Without a productized system, progress remained difficult to measure and improve over time.

What I led

Defined how AI and humans should collaborate across the legal deconstruction workflow

Translated research insights into design principles, workflow structure, and boundaries between human and AI responsibility

Helped shape prompt strategy and evaluation criteria using a research-based failure mode ontology

Designed a flexible system that supported defensibility, iteration, and legal judgment without over-standardizing the process

Three strategic moves

Move 1 - Modeled human + AI collaboration

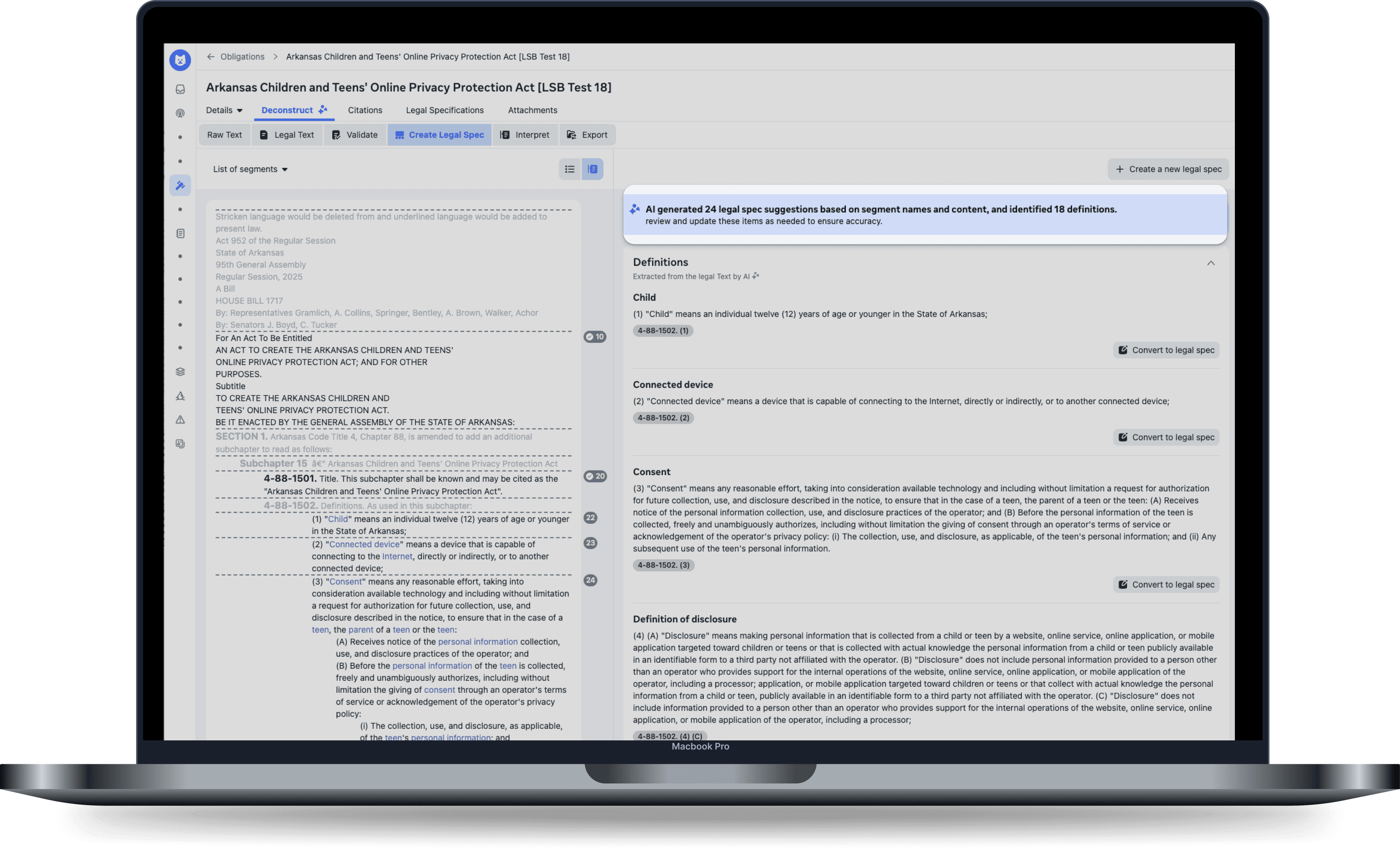

I structured the system around three modes (Validate, Create Legal Spec, and Interpret), each with a different balance of automation, review, and authorship.

Move 2 - Used research to shape the product and AI system

Research informed not only the UX but also the prompt strategy, the evaluation structure, and the failure modes used to assess interpretation quality.

Move 3 - Designed for defensibility, not rigid automation

Rather than forcing rigid automation, I designed a flexible system that kept legal outputs clear, traceable, and grounded enough to withstand scrutiny, while still supporting iteration and legal judgment without over-standardizing the process.

Impact

This work was earlier-stage than a mature consumer launch, so the strongest proof points were around workflow readiness, pilot validation, and AI usefulness rather than large-scale business metrics.

Early proof of value

6/6 laws were successfully deconstructed end-to-end using LSB, meeting pilot success criteria

AI suggestions were rated above neutral in helpfulness across key legal tasks, including validation, legal spec creation, relevancy, and interpretation

The product established a clearer and more measurable system for legal deconstruction than the prior manual process

Research, prompt strategy, and evaluation work created a stronger foundation for future AI maturity and downstream automation